We get asked, with some regularity, why we are not expanding our university engagement more aggressively. The talent pipeline is thin. The tooling gap in curricula is real. Our open-source infrastructure is a natural fit for classroom use. If we think this is important, why are we working with exactly one school, running exactly one competition, funding exactly one research project?

The short answer: because that is what we can do well right now, and doing more would mean doing it worse. The longer answer is worth spelling out, because the same argument applies to other parts of our growth.

Our team is small. Not as a transitional state, not until we hire more people, but as a deliberate feature of how the company operates. Every initiative we take on consumes a fixed amount of attention from a small number of people. The constraints are not money or ambition; they are the calendar time and mental bandwidth of the five to ten engineers and operators who end up touching any given project.

We have observed, over and over, that the difference between a program that moves the needle and one that produces activity without outcomes is whether it has one or two people who own it end to end and have the time to pay real attention. Spread those same two people across five universities instead of one, and you do not get five times the impact. You get five programs that are each one-fifth as likely to produce anything meaningful, because none of them has the continuous attention that turns good intentions into actual outcomes.

This is counterintuitive for people who come from large organizations. In a large company, scaling a program is often about adding more people to the team. One partnership manager per region, ten regions, you are in ten regions. The assumption is that impact scales roughly linearly with headcount allocated.

For small teams, that assumption does not hold. Each new partnership manager does not just add a new partnership; they also need to be supported, to communicate with the rest of the team, to coordinate with the other managers, and to navigate internal processes that themselves need to exist at that scale. The overhead eats most of the additional capacity. We have seen other small companies try to run five university programs simultaneously, and the consistent result is five weak programs that nobody is happy with.

What we are doing instead. The UTCN partnership, specifically:

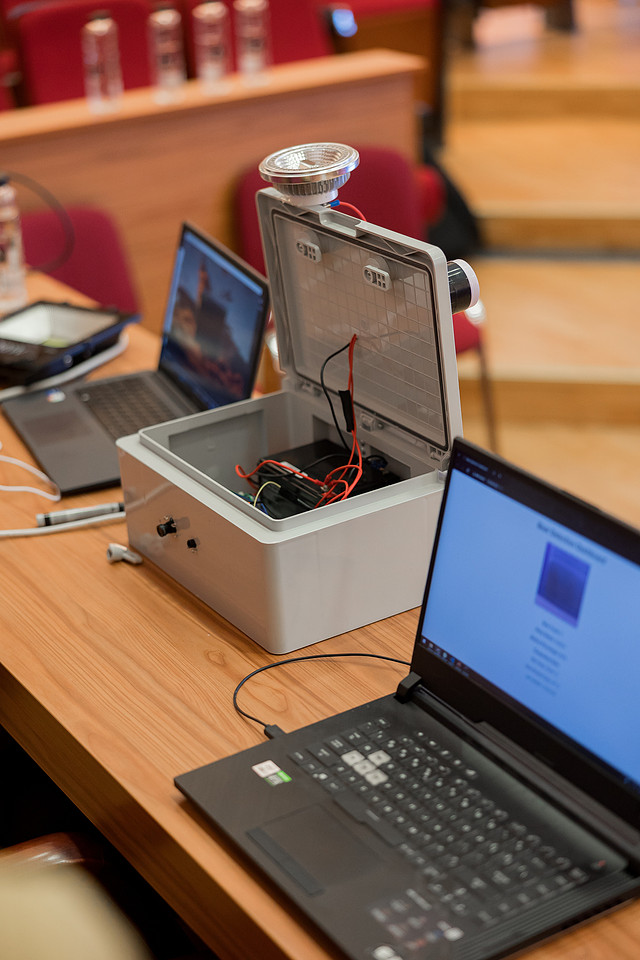

One competition, run once, then run again. The competition happens this year for the first time. We will measure carefully — registrations, completion rate, quality of submissions, participant feedback, what percentage of participants would recommend it, whether any of them ended up building with our tools afterward. We will fix everything that was broken. We will run it a second time with those fixes. Only then — after two completed iterations in one location, with real data — will we consider whether to run it elsewhere. That puts the earliest possible second-location date somewhere in late 2026 or early 2027, and we think that is the right pace.

One research grant, focused on a problem we actually care about. We picked a topic — memory consolidation in long-running agents — that maps directly to a technical problem we are working on internally. If the grant produces useful results, we adopt them. If it does not, we learn something. Either outcome is productive. Compare this to the pattern of giving out ten small grants across many areas, which produces a pile of low-engagement papers and nothing any specific team at any specific company can use.

One relationship with the university, nurtured over multiple years. Our partnership with the Faculty of Automation and Computer Science at UTCN is not a one-off check. It is a multi-year arrangement where we show up in person, review student work, send engineers to give guest lectures, and treat the faculty we work with as peers rather than counterparties. That takes real time. We can have one such relationship right now. With an order of magnitude more staff, we could have two or three. We cannot, today, fake that depth at ten schools, and we would rather have the real depth at one school than the shallow version at ten.

What we will not do in 2026.

Partner with additional universities. No matter how interesting the opportunity, no matter how well-aligned the faculty, the answer this year is no. We have told several universities the same thing, with an explanation, and all of them have been gracious about it.

Build a portal or an app for student engagement. A recurring suggestion is that we build infrastructure for scaling university programs — an application portal, a mentor matching system, a cohort management tool. We are not going to do that. Until we know what the program looks like at scale, building infrastructure for scale is premature optimization.

Hire a dedicated university-relations person. The initiative lives inside engineering leadership for now. When it outgrows that — which will happen if and when we run more than two or three events a year — we will revisit the staffing.

Make public commitments about future expansion. We have seen the cost of promising things you cannot deliver. We would rather under-commit and over-deliver than the reverse. If in two years we are running events at five universities, that will be because the first two worked, not because we promised it now.

The honest case for going slow. It is less exciting. It does not make for good announcements. It does not compound the way people hope initiatives will compound. But it is more likely to produce something real. One university where our presence actually matters to the students there is worth more than ten universities where we are one of many logos on a sponsor slide.

The UTCN competition is happening this year. The research grant is active. We will report honestly on both. If either does not work out the way we hoped, the next post in this space will say so. If they do work out, we will continue doing them, and only then — with real learning — consider what to do next.